Context Windows Are Finite: The System I Built to Keep BittsAI Chats Coherent


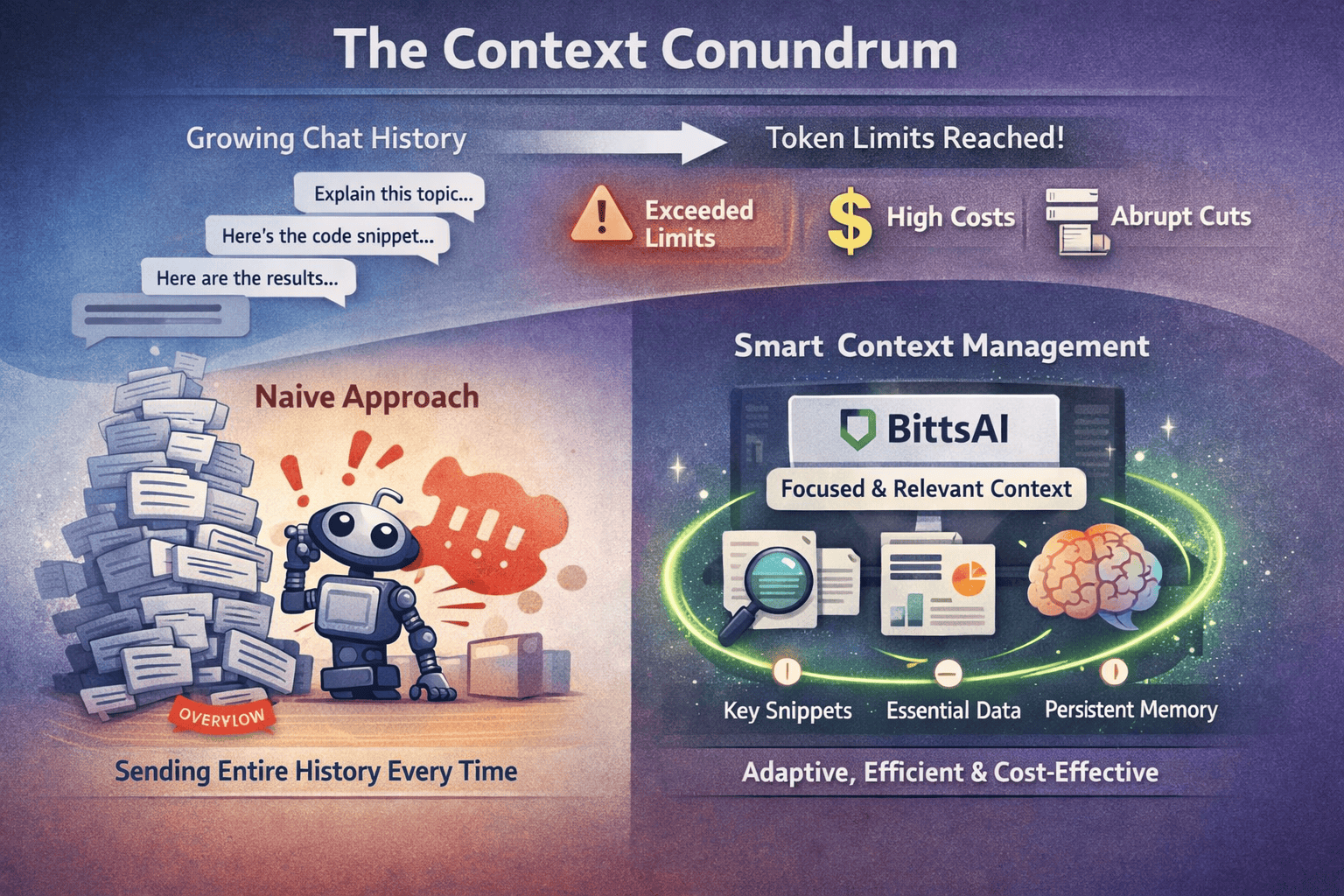

The context conundrum

Every LLM conversation eventually hits a wall. History grows, explanations lengthen, users paste additional snippets, and tool outputs add noise. Yet models have fixed limits on how many tokens they can process. If you naively send the entire history every time, you end up exceeding token limits, spiking costs, or suffering abrupt truncations that drop the very details your user cares about. For BittsAI, an AI-powered learning platform, I needed a more agile system. I built a context manager that keeps the “working set” small and relevant, preserving long-term continuity without sacrificing performance during deep study sessions.

Design goals

With BittsAI, I wanted context management to be predictable, easy to implement and user-first. This vision required three core guarantees:

- Adherence to the token budget: Always respect the predefined limits.

- Recency bias: Keep the most recent conversation turns available to the model.

- Intelligent trimming: Never lose important history when compression becomes necessary.

Instead of letting the model hit a hard limit, I treated the entire context as a dynamic budget. This allowed me to proactively compress older parts of the conversation into a running incremental summary, managing memory gracefully.

Architecture overview

At its core, the ContextManager transforms an unbounded chat history into a predictable, right-sized payload.

Lifecycle of a request

- State retrieval: Pull messages from Supabase and grab the latest summary.

- Budgeting: Calculate remaining tokens after the system prompt and headroom.

- Optimization path:

  - Under budget: Take the fast path and send the history as is.

  - Over budget: Apply a recency window and roll everything else into an incremental summary.

- Persistence: Save the updated summary and tag each summarized message's metadata with isSummarized set to true to avoid reprocessing on later turns.

- Fallback: If optimization fails, fall back to simple recency-based truncation so the user still gets a response.

Why summarization over RAG?

When building the ContextManager, RAG (Retrieval Augmented Generation) was an option. RAG uses vector embeddings and similarity search to retrieve relevant messages, which can surface specific facts from long conversations. For BittsAI, I chose incremental summarization for simplicity and fit. Here is why:

- Infrastructure: RAG requires managing vector databases and embedding pipelines; summarization uses the existing LLM and SQL database.

- Operational cost: RAG adds latency for similarity searches; summarization adds a single background LLM call to update the history.

- Best use case: RAG is for exact fact retrieval from massive datasets; summarization is for maintaining conversational narrative.

RAG would make sense if:

- Users frequently reference specific facts from hundreds of messages ago

- You need exact quotes or citations from earlier in the conversation

- The conversation includes many long form documents that need precise retrieval

When summarization works better:

- When conversation flow and recent context matter most

- When you want to minimize infrastructure complexity

- When cost and latency are concerns (one summary call vs. embedding + retrieval)

- When the conversation is naturally sequential rather than document heavy

For BittsAI, summarization provides the right balance of simplicity and effectiveness. The system maintains coherent context without the operational overhead of vector infrastructure, while still preserving the essential information needed for meaningful learning conversations.

Token accounting

Accurate token counting is essential for budget decisions, but re-tokenizing every message on every request is slow. The system avoids this by caching token counts when messages are saved. When a user sends a message, the route handler calculates inputTokens using gpt-tokenizer and stores it in message.metadata.inputTokens. When the model finishes streaming, the AI SDK's onFinish event provides usage data including outputTokens, inputTokens, totalTokens, reasoningTokens, and cachedInputTokens, which are persisted to the assistant message's metadata. The ContextManager then uses a priority system: it trusts these cached token counts first, and only falls back to re-tokenization when metadata is missing. This keeps most requests fast while staying accurate when cached counts aren't available.
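The priority system described above can be sketched roughly as follows. This is a minimal illustration, not BittsAI's actual code: the `ChatMessage` shape is hypothetical, `estimateTokens` is a crude stand-in for a real tokenizer such as gpt-tokenizer, and treating `outputTokens` as an assistant message's own size is an assumption.

```typescript
// Hypothetical message shape; only the fields needed for token accounting.
interface ChatMessage {
  role: "user" | "assistant";
  content: string;
  metadata?: { inputTokens?: number; outputTokens?: number };
}

// Stand-in for a real tokenizer. Roughly four characters per token is a
// rule of thumb for English text, not an exact count.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

// Prefer the cached count; re-tokenize only when metadata is missing.
function getMessageTokens(message: ChatMessage): number {
  // Assumption: a user message's size is its inputTokens, an assistant
  // message's size is its outputTokens.
  const cached =
    message.role === "assistant"
      ? message.metadata?.outputTokens
      : message.metadata?.inputTokens;
  if (typeof cached === "number" && cached > 0) {
    return cached;
  }
  return estimateTokens(message.content);
}

const cachedMessage: ChatMessage = {
  role: "user",
  content: "What is a closure?",
  metadata: { inputTokens: 6 },
};
const uncachedMessage: ChatMessage = {
  role: "assistant",
  content: "A closure captures variables from its enclosing scope.",
};

console.log(getMessageTokens(cachedMessage)); // uses the cached value
console.log(getMessageTokens(uncachedMessage)); // falls back to the estimate
```

The fallback only runs for messages saved before counts were cached or when the usage data was unavailable, so the common case is a plain property read.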

The threshold

The ContextManager triggers summarization at 80% of the model's context window, not 100%. The 20% safety margin leaves headroom for the model's response and accounts for token counting variance. If the total token count (messages + system prompt) is under this threshold, the system returns everything as is, which is the fastest path because it avoids database queries and summarization calls. Once over 80%, it switches to the optimization path: enforcing the budget, summarizing older messages, and building the compressed context. This threshold balances keeping full context when possible against proactively compressing before hitting limits.

Here's the threshold calculation:

```typescript
/**
 * Calculate the threshold at which we trigger summarization.
 * Set to 80% of context window to leave room for response and avoid hard cutoffs and account for token calculation variance.
 */
private calculateSummaryThreshold(): number {
  return Math.floor(this.config.contextWindow * SUMMARY_THRESHOLD_PERCENTAGE);
}
```

And the fast path check:

```typescript
// If under threshold, return all messages without summarization
if (totalTokens <= summaryThreshold) {
  return {
    originalMessages: allMessages,
    messages: allMessages,
    summary: '',
    totalTokens,
    truncatedCount: 0,
  };
}
```
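To make the arithmetic concrete, here is a small standalone sketch of the threshold decision. The numbers are made up for illustration (an 8,000-token window and a 500-token system prompt), `checkThreshold` is a hypothetical helper, and the 0.8 constant is assumed from the 80% figure above.

```typescript
// Assumed value, taken from the 80% figure in the text.
const SUMMARY_THRESHOLD_PERCENTAGE = 0.8;

interface ThresholdDecision {
  threshold: number;
  totalTokens: number;
  needsSummarization: boolean;
}

// Hypothetical helper: sums system prompt and message tokens, then compares
// the total against 80% of the context window.
function checkThreshold(
  contextWindow: number,
  systemPromptTokens: number,
  messageTokens: number[]
): ThresholdDecision {
  const threshold = Math.floor(contextWindow * SUMMARY_THRESHOLD_PERCENTAGE);
  const totalTokens =
    systemPromptTokens + messageTokens.reduce((sum, tokens) => sum + tokens, 0);
  return { threshold, totalTokens, needsSummarization: totalTokens > threshold };
}

// 500 + 1200 + 900 + 2000 = 4600, under the 6400 threshold: fast path.
const earlyTurn = checkThreshold(8000, 500, [1200, 900, 2000]);

// One more 2500-token message brings the total to 7100: optimization path.
const laterTurn = checkThreshold(8000, 500, [1200, 900, 2000, 2500]);

console.log(earlyTurn.needsSummarization, laterTurn.needsSummarization);
```

Note that 7,100 tokens would still fit inside the 8,000-token window. The threshold fires early on purpose so that the response has room to be generated.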

Budget enforcement

Once the threshold is crossed, the system enforces the token budget before summarizing. The enforceTokenBudget method starts with the existing summary and the new user message as the minimum required payload, then works backwards from the newest recent messages, adding each one if it fits. Messages that don't fit are marked as "dropped" and will be included in the summary. This ensures nothing is lost: dropped recent messages are preserved in the summary rather than discarded.

Here's the budget calculation:

```typescript
/**
 * Calculates available token budget for messages.
 * Budget = contextWindow - maxOutputTokens - systemPromptTokens
 */
private getAvailableBudget(): number {
  return this.config.contextWindow - this.config.maxOutputTokens - this.config.systemPromptTokens;
}
```

And the enforcement logic:

```typescript
private enforceTokenBudget(
  recentMessages: ChatMessage[],
  summaryTokens: number,
  newMessage: ChatMessage
): { fitting: ChatMessage[]; dropped: ChatMessage[] } {
  const availableBudget = this.getAvailableBudget();
  const newMessageTokens = this.getMessageTokens(newMessage);

  // Start with minimum required, summary + new message
  let currentTokens = summaryTokens + newMessageTokens;

  // If even the minimum doesn't fit, return all as dropped
  if (currentTokens > availableBudget) {
    return { fitting: [], dropped: recentMessages };
  }

  // Add recent messages from newest to oldest until budget exhausted
  const fitting: ChatMessage[] = [];
  const dropped: ChatMessage[] = [];

  for (let i = recentMessages.length - 1; i >= 0; i--) {
    const message = recentMessages[i];
    const messageTokens = this.getMessageTokens(message);

    if (currentTokens + messageTokens <= availableBudget) {
      fitting.push(message);
      currentTokens += messageTokens;
    } else {
      // This message and all older ones don't fit
      // Collect dropped messages (from index 0 to i inclusive)
      for (let j = 0; j <= i; j++) {
        dropped.push(recentMessages[j]);
      }
      break;
    }
  }

  // Restore order, oldest first
  fitting.reverse();

  return { fitting, dropped };
}
```

The key insight is that budget enforcement happens first, before summarization. This determines which recent messages fit in context, and those that don't fit are combined with older unsummarized messages for summarization. This preserves continuity: even if a recent message is too large to keep verbatim, its content flows into the summary.
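A worked example helps show how the split behaves. The sketch below is a simplified standalone variant of the same idea, not the method itself: `SizedMessage` and `splitByBudget` are hypothetical names, token counts are supplied directly, and all the numbers are invented.

```typescript
// Hypothetical simplified message: an id plus a precomputed token count.
interface SizedMessage {
  id: string;
  tokens: number;
}

// Keeps the longest run of newest messages that fits next to the reserved
// payload (summary + new message); everything older is dropped.
function splitByBudget(
  recent: SizedMessage[],
  reservedTokens: number,
  availableBudget: number
): { fitting: SizedMessage[]; dropped: SizedMessage[] } {
  if (reservedTokens > availableBudget) {
    return { fitting: [], dropped: [...recent] };
  }
  let used = reservedTokens;
  let cut = recent.length; // index of the oldest message that still fits
  for (let i = recent.length - 1; i >= 0; i--) {
    const candidate = recent[i];
    if (candidate === undefined || used + candidate.tokens > availableBudget) {
      break;
    }
    used += candidate.tokens;
    cut = i;
  }
  return { fitting: recent.slice(cut), dropped: recent.slice(0, cut) };
}

// Budget = 8000 (window) - 1000 (max output) - 500 (system prompt) = 6500.
// Reserved = 300 (summary) + 200 (new message) = 500.
const recentTurns: SizedMessage[] = [
  { id: "m1", tokens: 3000 },
  { id: "m2", tokens: 2600 },
  { id: "m3", tokens: 2000 },
  { id: "m4", tokens: 1500 },
];
const { fitting, dropped } = splitByBudget(recentTurns, 500, 6500);

// m4 and m3 fit (500 + 1500 + 2000 = 4000); adding m2 would reach 6600.
console.log(fitting.map((m) => m.id), dropped.map((m) => m.id));
```

Because the loop stops at the first message that does not fit, the kept messages always form one contiguous block ending at the newest turn, so the model never sees a conversation with holes in the middle.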

Summarization workflow

Following budget enforcement, the system merges older unsummarized messages with any dropped recent messages and generates the summary incrementally. If a summary already exists, the model updates it by integrating new information and removing outdated content. This keeps the summary compact (targeting 200–400 tokens) while preserving important context. After generation, the summary is persisted to Supabase.

The summary generation uses fast and affordable models with a prompt optimized for educational conversations. It prioritizes study topics, knowledge levels, and key clarifications while excluding pleasantries and redundant information. The incremental approach means each summary update builds on the previous one, keeping the token count manageable even as conversations grow.
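One way to picture the incremental update is as a prompt builder that takes the previous summary plus the messages being folded in. The sketch below is illustrative only: `buildSummaryPrompt`, the `TranscriptMessage` shape, and the prompt wording are all assumptions, not the prompt BittsAI actually uses.

```typescript
// Hypothetical minimal message shape for building the transcript.
interface TranscriptMessage {
  role: "user" | "assistant";
  content: string;
}

// Builds an update prompt when a summary exists, or a from-scratch prompt
// when it does not. The instruction wording is invented for illustration.
function buildSummaryPrompt(
  existingSummary: string,
  messagesToFold: TranscriptMessage[]
): string {
  const transcript = messagesToFold
    .map((message) => `${message.role}: ${message.content}`)
    .join("\n");

  const instructions = [
    "You maintain a running summary of a tutoring conversation.",
    "Keep study topics, the learner's knowledge level, and key clarifications.",
    "Drop pleasantries and redundant details.",
    "Keep the result to roughly 200-400 tokens.",
  ].join(" ");

  const previous =
    existingSummary.trim().length > 0
      ? `Existing summary (update it, removing anything outdated):\n${existingSummary.trim()}`
      : "There is no existing summary yet; write one from scratch.";

  return `${instructions}\n\n${previous}\n\nNew messages to integrate:\n${transcript}`;
}

const updatePrompt = buildSummaryPrompt(
  "Learner is studying JavaScript closures at a beginner level.",
  [
    { role: "user", content: "So a closure keeps the variable alive?" },
    { role: "assistant", content: "Yes, as long as the inner function is reachable." },
  ]
);
console.log(updatePrompt);
```

Since only the previous summary and the newly folded messages are sent, the size of the summarization call stays roughly constant no matter how long the conversation gets.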

Persistence and drift control

After generating a summary, the system persists it to Supabase and marks messages as summarized. This two-step process can drift if one step fails: messages could be marked as summarized while the summary isn't saved, or vice versa. To reduce drift, the summary upsert retries once on failure. If it still fails after retry, the error is logged but doesn't block the response; the next request will regenerate the summary if needed. When marking messages as summarized, the system uses batched parallel updates with Promise.allSettled to handle partial failures gracefully.
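The retry-once and batched-update behavior could look something like the sketch below. It assumes nothing about the Supabase client: `withSingleRetry` and `markSummarized` are hypothetical helpers, the database calls are passed in as plain async functions, and the batch size of 10 is an arbitrary default.

```typescript
// Runs the task, and retries exactly once if the first attempt rejects.
async function withSingleRetry<T>(task: () => Promise<T>): Promise<T> {
  try {
    return await task();
  } catch {
    return await task();
  }
}

// Marks messages as summarized in batches. Each batch runs in parallel via
// Promise.allSettled, so one failed update does not abort the others.
async function markSummarized(
  messageIds: string[],
  updateOne: (id: string) => Promise<void>,
  batchSize = 10
): Promise<{ succeeded: string[]; failed: string[] }> {
  const succeeded: string[] = [];
  const failed: string[] = [];
  for (let start = 0; start < messageIds.length; start += batchSize) {
    const batch = messageIds.slice(start, start + batchSize);
    const results = await Promise.allSettled(batch.map((id) => updateOne(id)));
    results.forEach((result, index) => {
      const id = batch[index];
      if (id === undefined) return;
      (result.status === "fulfilled" ? succeeded : failed).push(id);
    });
  }
  return { succeeded, failed };
}

// Usage with simulated failures: the upsert fails once, one row update fails.
async function runPersistenceDemo(): Promise<void> {
  let attempts = 0;
  const saved = await withSingleRetry(async () => {
    attempts += 1;
    if (attempts === 1) throw new Error("simulated timeout");
    return "summary saved";
  });
  const marks = await markSummarized(
    ["a", "b", "c"],
    async (id) => {
      if (id === "b") throw new Error("simulated update failure");
    },
    2
  );
  console.log(saved, attempts, marks);
}

runPersistenceDemo();
```

Messages that land in `failed` simply stay unmarked, so a later request picks them up again. The worst case is redundant summarization rather than lost history.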

Graceful degradation

Even with retries and careful error handling, failures can occur: database timeouts, summarization API errors, or edge cases where the summary plus the new message alone exceeds the budget. The ContextManager includes a fallback path that ensures the user always gets a response. If the optimized path fails at any point, it falls back to simpleRecencyTruncation: a straightforward algorithm that works backwards from the most recent messages, adding each one to the context until the budget is exhausted. This fallback preserves any existing summary if it fits, and uses cached data when available to avoid redundant database calls. The result is a response that may lose some older context, but still provides a coherent reply based on recent conversation.

```typescript
/**
 * Simple recency-based truncation fallback.
 * Keeps as many recent messages as fit within the token budget.
 * Used when optimized context building fails.
 */
simpleRecencyTruncation(messages: ChatMessage[], existingSummary?: string): ContextWindow {
  const availableBudget = this.getAvailableBudget();
  let currentTokens = 0;
  const selectedMessages: ChatMessage[] = [];

  // Account for summary tokens if we have one
  const summaryTokens = this.getSummaryTokens(existingSummary);
  currentTokens += summaryTokens;

  // Work backwards from most recent, adding messages while under budget
  for (let i = messages.length - 1; i >= 0; i--) {
    const message = messages[i];
    const messageTokens = this.getMessageTokens(message);

    if (currentTokens + messageTokens <= availableBudget) {
      selectedMessages.push(message);
      currentTokens += messageTokens;
    } else {
      break;
    }
  }

  selectedMessages.reverse();

  return {
    originalMessages: messages,
    messages: selectedMessages,
    summary: existingSummary || '',
    totalTokens: currentTokens + this.config.systemPromptTokens,
    truncatedCount: messages.length - selectedMessages.length,
  };
}
```

The fallback is triggered in two scenarios:

- If budget enforcement determines that even the summary plus the new message alone exceeds the available budget, it immediately falls back rather than attempting summarization.

- If any error occurs during the aforementioned optimization path, the catch block uses cached data when available and falls back to simple truncation.

This ensures that even in failure scenarios, the user gets a response based on the most recent conversation context, maintaining a functional experience even when the sophisticated optimization path fails.
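The two triggers can be sketched as a thin wrapper around the optimized path. This is a hedged illustration rather than the real control flow: `buildContextSafely`, the `reason` field, and the option names are all hypothetical, and the optimized and fallback builders are passed in as plain functions.

```typescript
// Hypothetical result shape; `reason` records which path produced the context.
interface BuiltContext {
  messageIds: string[];
  reason: "optimized" | "minimum-over-budget" | "optimized-path-error";
}

interface BuildOptions {
  minimumPayloadTokens: number; // summary + new message
  availableBudget: number;
  optimized: () => Promise<string[]>;
  fallback: () => string[];
}

async function buildContextSafely(options: BuildOptions): Promise<BuiltContext> {
  // Scenario 1: even the minimum payload is too large, so skip summarization.
  if (options.minimumPayloadTokens > options.availableBudget) {
    return { messageIds: options.fallback(), reason: "minimum-over-budget" };
  }
  try {
    return { messageIds: await options.optimized(), reason: "optimized" };
  } catch {
    // Scenario 2: anything thrown by the optimized path lands here.
    return { messageIds: options.fallback(), reason: "optimized-path-error" };
  }
}

// Usage: the optimized path fails with a simulated summarization error.
buildContextSafely({
  minimumPayloadTokens: 500,
  availableBudget: 6500,
  optimized: async () => {
    throw new Error("simulated summarization API error");
  },
  fallback: () => ["m3", "m4"],
}).then((context) => console.log(context.reason, context.messageIds));
```

Recording which path was taken is optional, but it makes it easy to log how often the fallback fires in production.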

Conclusion

Building BittsAI's ContextManager showed that managing finite LLM context is a systems problem, not just prompt engineering. By treating context as a budget, counting tokens, prioritizing recent messages, and incrementally summarizing older content, the system keeps conversations coherent even as they grow to hundreds of messages. The key insight is enforcing the budget first, then summarizing what doesn't fit, so that dropped messages flow into the summary instead of being discarded. Combined with careful persistence logic that limits drift, the system degrades gracefully even when things go wrong.

Choosing summarization over RAG prioritized simplicity and fit. For conversational learning apps, maintaining narrative flow matters more than retrieving specific facts verbatim. The result is a system that's easier to maintain, cheaper to run, and fast enough for real-time chat without the operational overhead of vector databases. For anyone building LLM-powered applications, context management isn't optional. Whether you choose summarization, RAG, or a hybrid approach, having a strategy for managing finite context windows is essential for building production-ready AI applications.